Understanding Closed-Source AI Models in Simple Terms

Modern artificial intelligence is evolving rapidly, and the large language model has become the foundation of many AI-driven applications. Businesses, developers, and researchers are using AI models, machine learning systems, and natural language processing tools to automate workflows, improve customer experience, and build intelligent software.

One of the most common questions people ask is:

Which characteristic is common to closed-source large language model systems?

This guide answers that question and explains the practical differences between closed-source AI, open-source AI models, and enterprise-ready deployments, especially when running workloads on GPU cloud platforms like inhosted.ai.

What Characteristic Is Common to Closed-Source Large Language Models?

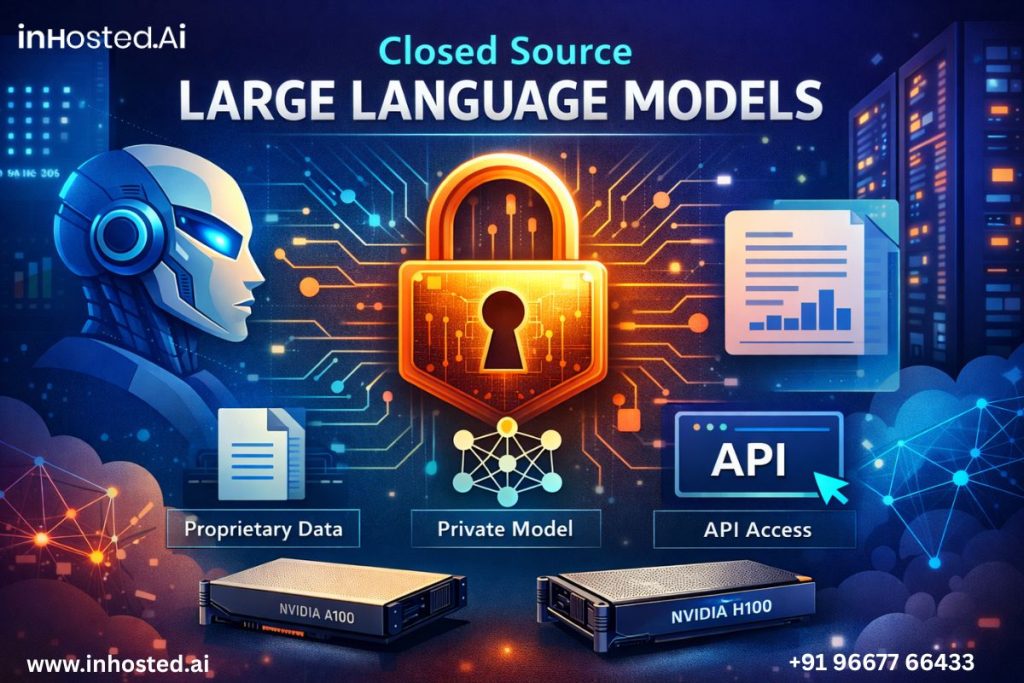

The defining characteristic shared by closed-source large language model systems is proprietary control.

This means:

- The model architecture is private

- Training datasets remain confidential

- Internal parameters and weights are not publicly accessible

- Access is provided through managed APIs or platforms

Users can send inputs and receive outputs, but they cannot inspect or modify the underlying model.

This restricted access is the key factor that distinguishes closed-source AI from open-source alternatives.

What Is a Large Language Model?

A large language model is an advanced artificial intelligence system trained on massive text datasets to understand and generate human-like responses.

These models rely on:

- transformer neural networks

- deep learning techniques

- large-scale natural language processing

Common use cases include:

- AI chatbots

- content generation tools

- code assistants

- automated data analysis

- conversational interfaces

Because of their size, modern large language model systems require powerful computing resources, typically data-center GPUs such as the Nvidia A100 and Nvidia H100.

What Does “Closed Source” Mean in AI?

In AI development, “closed source” refers to systems where:

- Source code is restricted

- Training pipelines are private

- Model weights are not downloadable

Instead of distributing the model directly, companies provide access through:

- API-based AI services

- managed AI platforms

- enterprise cloud environments

Because the provider runs the model on its own infrastructure, it can manage performance, updates, and security centrally.

Core Characteristics of Closed-Source Large Language Models

- Proprietary Architecture and Training Data

Closed-source large language model systems maintain ownership over their internal design.

Organizations invest heavily in:

- model training infrastructure

- curated datasets

- optimization techniques

Because these elements represent significant intellectual property, they remain confidential.

- API-Based Access

A key secondary trait of closed-source models is controlled access.

Users typically:

- send prompts through APIs

- receive generated responses

- rely on managed environments for scaling

This removes the need for organizations to maintain complex AI infrastructure internally.
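The request and response pattern described above can be sketched as follows. This is a minimal illustration, not any specific provider's API: the endpoint URL, the `prompt` and `max_tokens` field names, and the bearer-token header are assumptions chosen for the example.

```python
import json
import urllib.request

# Hypothetical endpoint; real providers document their own URLs and schemas.
API_URL = "https://api.example.com/v1/generate"


def build_request(prompt: str, api_key: str, max_tokens: int = 256) -> urllib.request.Request:
    """Package a prompt as an HTTP POST request for a hosted model."""
    payload = {"prompt": prompt, "max_tokens": max_tokens}  # assumed field names
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


# Building the request needs no network access; send() is what contacts the provider.
request = build_request("Summarize our refund policy.", api_key="YOUR_API_KEY")
print(request.get_method(), request.full_url)
```

The application only ever handles the prompt and the returned text. The model weights, serving stack, and scaling logic stay on the provider's side of the API.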

- Performance Optimization Using GPU Infrastructure

Closed-source large language model providers optimize performance using enterprise GPU clusters.

These frequently include:

- Nvidia A100 GPUs

- Nvidia H100 accelerators

Such hardware enables:

- faster inference

- improved scalability

- lower latency for real-time AI applications

Deploying AI workloads on GPU cloud infrastructure like inhosted.ai allows businesses to leverage this performance without purchasing physical hardware.

- Built-In Safety and Governance Systems

Closed-source models often integrate:

- AI safety mechanisms

- bias mitigation systems

- usage moderation filters

- enterprise compliance controls

These built-in safeguards help maintain responsible AI usage.

- Limited Deep Customization

Unlike open-source alternatives, closed-source large language model platforms typically restrict deep-level modifications.

Customization happens through:

- prompt engineering

- API configurations

- limited fine-tuning options

This simplifies deployment for companies that prioritize reliability over experimentation.
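As a rough sketch of what customization through API configuration can look like, the example below bundles a few request-level settings with a user prompt. The setting names (`temperature`, `max_tokens`, `system_prompt`) and the accepted temperature range are assumptions for illustration; each provider defines its own options and limits.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class GenerationConfig:
    """Request-level settings; names are illustrative, not a real provider's schema."""
    temperature: float = 0.2  # lower values favor more deterministic output
    max_tokens: int = 300     # cap on response length
    system_prompt: str = "You are a concise, polite assistant."

    def __post_init__(self):
        # Assumed limits; check the provider's documentation for real ones.
        if not 0.0 <= self.temperature <= 2.0:
            raise ValueError("temperature must be between 0.0 and 2.0")
        if self.max_tokens <= 0:
            raise ValueError("max_tokens must be positive")


def build_payload(user_prompt: str, config: GenerationConfig) -> dict:
    """Combine the user's prompt with the chosen settings into one request body."""
    return {"prompt": user_prompt, **asdict(config)}


payload = build_payload(
    "Draft a release note for version 2.1.",
    GenerationConfig(temperature=0.7),
)
print(payload)
```

Everything adjustable here lives in the request. Nothing in the sketch touches the model's weights or architecture, which is the practical meaning of limited deep customization.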

Why Enterprises Choose Closed-Source Large Language Models

Many organizations prefer closed-source AI due to operational advantages.

Faster Deployment

Companies can integrate AI features without building models from scratch.

Reduced Infrastructure Complexity

Managed services eliminate the need to maintain GPU clusters.

Reliable Performance

Providers handle scaling, updates, and optimization.

Enterprise Support

Documentation, monitoring tools, and SLAs improve reliability.

Real-World Deployment Example

Imagine a SaaS company implementing AI-powered customer support.

Instead of training its own large language model, the company:

- Uses a closed-source model through API access

- Designs prompts tailored to support workflows

- Deploys inference workloads on GPU cloud platforms powered by Nvidia A100 hardware

The result:

- faster rollout

- scalable performance

- minimal infrastructure overhead
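The prompt-design step in this example might look something like the sketch below. The template wording, the product name, and the policy text are all hypothetical; a real support team would write and test its own.

```python
from string import Template

# Hypothetical template; the fields and wording are assumptions for illustration.
SUPPORT_TEMPLATE = Template(
    "You are a support agent for $product.\n"
    "Answer using only the policy excerpt below. "
    "If the excerpt does not cover the question, say so and offer to escalate.\n\n"
    "Policy excerpt:\n$policy\n\n"
    "Customer question:\n$question"
)


def build_support_prompt(product: str, policy: str, question: str) -> str:
    """Fill the template with the ticket-specific values."""
    return SUPPORT_TEMPLATE.substitute(product=product, policy=policy, question=question)


prompt = build_support_prompt(
    product="ExampleApp",
    policy="Refunds are available within 30 days of purchase.",
    question="Can I get a refund after two weeks?",
)
print(prompt)
```

The resulting string is what the company would send to the closed-source model through its API. Changing the support behavior means editing the template, not retraining a model.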

Role of GPU Infrastructure in Large Language Models

Infrastructure largely determines how quickly and how economically a large language model can run.

Modern large language model systems depend on GPU acceleration because they:

- process billions of parameters

- require parallel computation

- demand high memory bandwidth
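A quick back-of-the-envelope calculation shows why parameter count translates into hardware demand. The sketch below estimates the memory needed just to hold a model's weights. The parameter counts are hypothetical examples, and the 80 GB figure assumes a GPU with that much memory, a capacity offered on both A100 and H100 cards.

```python
import math

# Bytes used to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: int, precision: str = "fp16") -> float:
    """Memory needed to hold the weights alone, in GB (1 GB = 10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 10**9


def min_gpus_for_weights(num_params: int, gpu_memory_gb: int = 80, precision: str = "fp16") -> int:
    """Lower bound on GPU count; ignores activations, caches, and runtime overhead."""
    return math.ceil(weight_memory_gb(num_params, precision) / gpu_memory_gb)


# Hypothetical model sizes: 7 billion and 70 billion parameters.
for params in (7_000_000_000, 70_000_000_000):
    print(params, weight_memory_gb(params), min_gpus_for_weights(params))
```

Under these assumptions, a 7-billion-parameter model needs about 14 GB for its weights at 16-bit precision, while a 70-billion-parameter model needs about 140 GB and has to be split across at least two 80 GB GPUs. Real deployments need additional memory on top of this, so the figures are a floor, not a sizing guide.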

Hardware like Nvidia H100 significantly improves efficiency for enterprise AI workloads.

Platforms like inhosted.ai provide scalable access to these resources, helping organizations deploy AI faster.

Key Considerations Before Choosing Closed-Source AI

Before selecting a closed-source large language model, consider:

- data privacy requirements

- scalability needs

- cost predictability

- latency expectations

- integration flexibility

Strategic evaluation ensures the chosen solution aligns with business goals.
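For the cost predictability item in particular, a simple estimate can help. The sketch below assumes the provider bills per token, with separate input and output rates, which is a common pricing model for hosted APIs. The traffic numbers and prices are made up for illustration and do not reflect any real provider's rates.

```python
def estimate_monthly_cost(
    requests_per_day: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    input_price_per_million: float,
    output_price_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly API spend from average traffic and per-token prices."""
    total_requests = requests_per_day * days
    input_cost = total_requests * input_tokens_per_request / 1_000_000 * input_price_per_million
    output_cost = total_requests * output_tokens_per_request / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical workload: 1,000 requests a day, 500 input and 200 output tokens each,
# priced at 0.50 and 1.50 per million tokens.
monthly_cost = estimate_monthly_cost(
    requests_per_day=1000,
    input_tokens_per_request=500,
    output_tokens_per_request=200,
    input_price_per_million=0.50,
    output_price_per_million=1.50,
)
print(f"Estimated monthly cost: {monthly_cost:.2f}")
```

Running the same function with peak rather than average traffic gives a quick sense of how far the monthly bill could swing.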

FAQs

- What is the main characteristic of closed-source large language models?

The primary characteristic is proprietary control, meaning the internal architecture, model weights, and training data remain private.

- Can developers modify closed-source models?

Deep modifications are usually not allowed; customization happens through prompts or APIs.

- Why are GPUs important for large language models?

GPUs like Nvidia A100 and Nvidia H100 provide the parallel computation and memory bandwidth needed for efficient AI inference.

- Are closed-source models better than open-source?

Not necessarily. They are typically easier to deploy but offer less customization.

- Who should use closed-source AI?

Businesses seeking fast deployment, scalability, and managed infrastructure often benefit most.

Final Conclusion

The defining feature of a closed-source system is proprietary control over the large language model, making it ideal for organizations that need reliable performance, scalable infrastructure, and enterprise-ready AI deployment.