The NVIDIA H100 price in India starts from ₹249/hour on cloud platforms, based on real-time pricing from Inhosted.ai.

For businesses considering ownership, the estimated purchase cost ranges from ₹25 lakh to ₹40 lakh, depending on configuration and availability.

- Cloud (on-demand): ₹249/hour

- Purchase (estimated market price): ₹25–40 lakh

If you’re planning AI or LLM training in India, this cost plays a critical role in your infrastructure decisions.

Why NVIDIA H100 Pricing Matters for AI Projects

If you’re building AI models, training LLMs, or scaling inference workloads, GPU costs are not just a number; they directly impact:

- total project budget

- training speed

- scalability

Even a small difference in hourly pricing can add up to lakhs of rupees per month at scale.
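To see how quickly a small rate gap compounds, here is a minimal Python sketch. It assumes 24/7 usage over a 30-day month, and the ₹269/hour comparison rate is a hypothetical figure chosen for illustration, not a quoted price.

```python
# Sketch: monthly cost gap between two hourly GPU rates.
# Assumption: GPUs run 24/7 for a 30-day month (720 hours).

HOURS_PER_MONTH = 24 * 30


def monthly_cost_gap(rate_a: float, rate_b: float, gpus: int) -> float:
    """Return the monthly difference in rupees between two hourly rates."""
    return abs(rate_a - rate_b) * HOURS_PER_MONTH * gpus


def to_lakh(rupees: float) -> float:
    """Convert rupees to lakh (1 lakh = 100,000 rupees)."""
    return rupees / 100_000


# ₹249/hour is the quoted rate; ₹269/hour is a hypothetical alternative rate.
gap = monthly_cost_gap(249, 269, gpus=8)
print(f"Monthly difference on 8 GPUs: ₹{to_lakh(gap):.2f} lakh")
# Monthly difference on 8 GPUs: ₹1.15 lakh
```

A ₹20/hour gap on eight GPUs works out to about ₹1.15 lakh a month; wider gaps or larger clusters scale the difference linearly.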

NVIDIA H100 Price in India (Detailed Breakdown)

Cloud pricing mentioned above is based on real-time GPU rates from Inhosted.ai.

NVIDIA H100 Cloud Price in India (Real Data)

The most practical way to use NVIDIA H100 in India is through cloud deployment.

Key Pricing Insight:

- Standard cloud usage: ₹249/hour

- Scalable GPU clusters: custom pricing

Why Cloud is Preferred:

- No upfront investment

- Instant deployment

- Easy scalability

This makes cloud GPUs ideal for startups and growing AI teams.

Monthly Cost of NVIDIA H100 in India

Let’s calculate the monthly cost, assuming the GPU runs 24/7 for a 30-day month:

- ₹249/hour × 24 hours × 30 days = ₹1,79,280

≈ ₹1.79 lakh per month (per GPU)

Real Scenario:

- 4 GPUs → ₹7+ lakh/month

- 8 GPUs → ₹14+ lakh/month
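The same arithmetic as a small Python sketch, assuming 24/7 usage for a 30-day month at the ₹249/hour on-demand rate and ignoring storage, networking, and any volume discounts:

```python
# Sketch: estimated monthly cloud cost for an H100 deployment.
# Assumptions: 24/7 usage, 30-day month, flat ₹249/hour on-demand rate.

RATE_PER_GPU_HOUR = 249    # rupees, on-demand rate quoted in this article
HOURS_PER_MONTH = 24 * 30  # 720 hours


def monthly_cost(gpus: int, rate: float = RATE_PER_GPU_HOUR,
                 hours: int = HOURS_PER_MONTH) -> float:
    """Estimated monthly cost in rupees for `gpus` GPUs running `hours` hours."""
    return gpus * rate * hours


def to_lakh(rupees: float) -> float:
    """Convert rupees to lakh (1 lakh = 100,000 rupees)."""
    return rupees / 100_000


for n in (1, 4, 8):
    print(f"{n} GPU(s): ₹{to_lakh(monthly_cost(n)):.2f} lakh/month")
# 1 GPU(s): ₹1.79 lakh/month
# 4 GPU(s): ₹7.17 lakh/month
# 8 GPU(s): ₹14.34 lakh/month
```

Passing a smaller `hours` value, for example `monthly_cost(1, hours=8 * 30)` for eight hours a day, shows how scheduling GPU time reduces the bill proportionally.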

This is why planning GPU usage is critical for cost optimization.

Why NVIDIA H100 is Expensive in India

1. Import Costs

H100 GPUs are imported, increasing the overall price.

2. High Demand

AI adoption has created massive demand for high-performance GPUs.

3. Infrastructure Requirements

Running H100 requires the following:

- high power consumption

- advanced cooling systems

- data center-grade setup

These factors increase total cost beyond just the GPU price.

Buy vs. Rent: What Should You Choose?

Cloud (Best for Most Users)

- ₹249/hour usage-based pricing

- zero upfront investment

- scalable infrastructure

Ideal for:

- startups

- AI developers

- LLM experimentation

Purchase (Enterprise Level)

- ₹25–40 lakh upfront investment

- long-term usage advantage

- full control

Ideal for:

- large organizations

- continuous workloads
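One way to frame the buy-vs-rent decision is a simple break-even estimate: how many months of on-demand cloud usage cost as much as buying the card outright. The Python sketch below uses the ₹249/hour rate and the ₹25–40 lakh purchase range from this article and assumes 24/7 usage. It deliberately ignores power, cooling, and data center setup costs, which push the real break-even point later.

```python
# Sketch: rough break-even between buying an H100 and renting it in the cloud.
# Simplifying assumption: compares only the purchase price with cloud rental;
# power, cooling, and data center setup costs for owned hardware are ignored.

CLOUD_RATE = 249           # rupees per GPU-hour (on-demand rate quoted above)
HOURS_PER_MONTH = 24 * 30  # assumes 24/7 usage over a 30-day month


def break_even_months(purchase_price: float, rate: float = CLOUD_RATE,
                      hours_per_month: float = HOURS_PER_MONTH) -> float:
    """Months of cloud usage whose rental cost equals the purchase price."""
    return purchase_price / (rate * hours_per_month)


for price_lakh in (25, 40):
    months = break_even_months(price_lakh * 100_000)
    print(f"₹{price_lakh} lakh purchase ≈ {months:.1f} months of 24/7 cloud usage")
# ₹25 lakh purchase ≈ 13.9 months of 24/7 cloud usage
# ₹40 lakh purchase ≈ 22.3 months of 24/7 cloud usage
```

At lower utilization (a smaller `hours_per_month`), break-even moves further out, which is consistent with cloud suiting bursty experimentation and purchase suiting continuous workloads.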

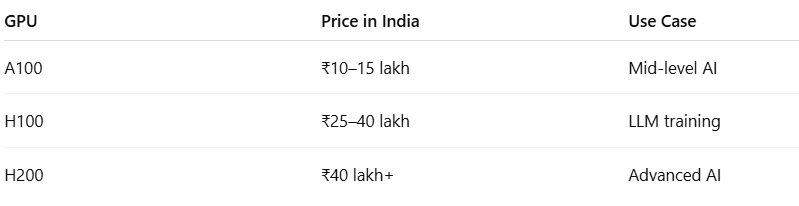

H100 vs Other GPUs (Price Perspective)

For many modern AI workloads, the H100 offers a strong balance of performance and cost.

Who Should Use NVIDIA H100?

NVIDIA H100 is ideal for:

- training large language models (LLMs)

- AI startups scaling quickly

- enterprise AI workloads

- high-performance computing

FAQs: NVIDIA H100 Price & Usage in India

1. What is the NVIDIA H100 price in India?

It starts at ₹249/hour on cloud platforms, while buying one costs an estimated ₹25–40 lakh.

2. What is the monthly cost of H100 in India?

Approximately ₹1.79 lakh/month per GPU at ₹249/hour, assuming 24/7 usage for a 30-day month.

3. Why is NVIDIA H100 expensive?

Its price reflects import costs, high demand, and infrastructure requirements.

4. Is cloud better than buying an H100?

For most users, yes: it offers flexibility and a lower upfront cost.

5. What is the H100 mainly used for?

Large-scale deep learning training, generative AI, and high-performance inference.

6. Are there alternatives to H100?

Yes. Many workloads run efficiently on other data center GPUs or cloud instances.

7. Is H100 a long-term investment?

It can be. The H100 is an enterprise-grade GPU designed for multi-year use in AI applications, so it suits organizations with continuous workloads.

Conclusion:

If you are researching the NVIDIA H100 price in India, you are already planning AI work at a serious scale.

The goal is not simply to get the most powerful GPU, but to choose the right infrastructure for the stage your AI project is at.