Speed is no longer optional; it’s the difference between leading the market and falling behind. From AI models to real-time analytics, modern workloads demand serious performance. This is where the GPU vs. CPU debate becomes critical.

If you have noticed applications running dramatically faster on GPUs, it’s not magic; it’s architecture. And once you understand it, the shift toward GPU server infrastructure makes complete sense.

What is the difference between a GPU and a CPU?

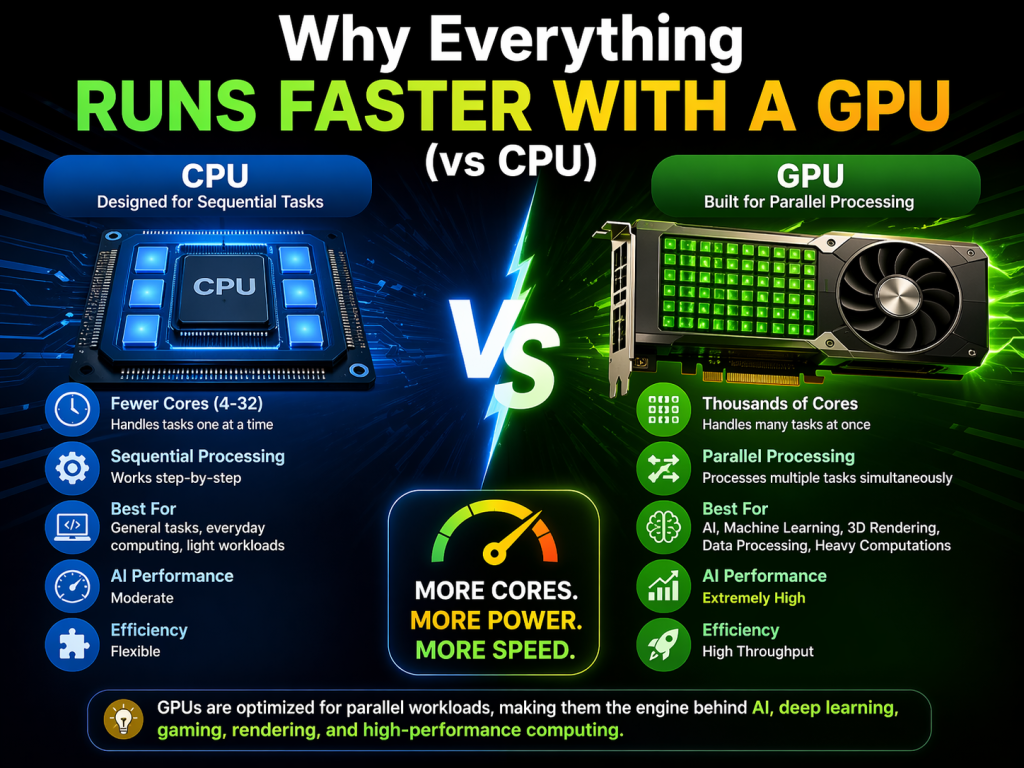

At the core, the CPU vs. GPU difference lies in how they process tasks.

A CPU is designed for general-purpose computing. It handles a small number of tasks at a time, each with high speed and precision. A GPU, on the other hand, is built for parallel processing, meaning it can handle thousands of operations simultaneously.

The two processors are fundamentally designed for different purposes, which is why GPUs dominate in AI, cloud computing, and big data environments.

GPU vs. CPU Performance Comparison: Why Are GPUs Faster?

Let’s simplify this with a real-world example.

Think about processing 1 million data points:

- A CPU handles tasks step by step.

- A GPU processes thousands of tasks at the same time.

That is where the real performance gap between GPUs and CPUs becomes clear.
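The contrast above can be sketched in a few lines of Python. This is only an illustration of how the work is divided, not GPU code: the function names, the chunk size, and the thread pool are assumptions made for the example, and Python threads will not reproduce GPU-level speedups. The point is that each data point is independent, so the work can be split into chunks and handed to many workers at once.

```python
from concurrent.futures import ThreadPoolExecutor


def transform(x):
    # Hypothetical per-point operation; any independent calculation would do.
    return x * 2 + 1


def process_sequentially(data):
    # CPU-style: one data point after another.
    return [transform(x) for x in data]


def process_in_chunks(data, chunk_size=100_000, workers=8):
    # GPU-style decomposition: split the data into independent chunks
    # and hand each chunk to a separate worker.
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(process_sequentially, chunks))
    # Stitch the chunk results back together in their original order.
    return [y for chunk in results for y in chunk]


data = list(range(1_000_000))
assert process_sequentially(data) == process_in_chunks(data)
```

Both functions return the same result; only the way the work is organized differs. A real GPU applies the same idea in hardware, with thousands of cores each taking a slice of the data.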

In a real business scenario:

- With a CPU, training an AI model can take anywhere from 8 to 12 hours.

- With a GPU, the same job is often complete in just 20 to 40 minutes.

That is roughly 12x to 36x faster in this scenario, which is why enterprises are rapidly shifting toward GPU infrastructure. What this really means for businesses is faster results, shorter delays, and better overall efficiency.
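The ratio implied by those two time ranges can be worked out directly. The short sketch below uses only the illustrative figures quoted above (8 to 12 hours on a CPU, 20 to 40 minutes on a GPU); the function name is made up for this example, and actual speedups depend heavily on the model, the data, and the hardware.

```python
def speedup_range(cpu_hours, gpu_minutes):
    # Convert the CPU range to minutes so both sides use the same unit.
    cpu_min, cpu_max = (h * 60 for h in cpu_hours)
    gpu_min, gpu_max = gpu_minutes
    # Smallest speedup: fastest CPU run vs. slowest GPU run.
    lowest = cpu_min / gpu_max
    # Largest speedup: slowest CPU run vs. fastest GPU run.
    highest = cpu_max / gpu_min
    return lowest, highest


low, high = speedup_range(cpu_hours=(8, 12), gpu_minutes=(20, 40))
print(f"{low:.0f}x to {high:.0f}x faster")  # prints "12x to 36x faster"
```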

CPU vs. GPU Architecture: Where the Real Shift Happens.

The real difference is not just speed; it’s design. This is exactly where the CPU vs. GPU difference becomes clear, especially in how each architecture handles performance at scale.

Understanding CPU vs. GPU architecture explains everything.

CPU Architecture:

- Few powerful cores.

- Designed for complex, varied tasks.

- High control and flexibility.

GPU Architecture:

- Thousands of smaller cores.

- Designed for repetitive tasks.

- Built for high throughput.

This is exactly where GPUs outperform CPUs, especially in AI training, simulations, and rendering workloads.
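A rough throughput model shows why many simple cores can beat a few powerful ones on repetitive work. Every number here (core counts, per-task times, batch size) is a hypothetical value chosen for illustration, not a measurement of any real chip, and the model ignores memory transfer and scheduling costs.

```python
import math


def batch_time(num_tasks, cores, seconds_per_task):
    # Each core takes one task per round, so the batch needs
    # ceil(num_tasks / cores) rounds to finish.
    rounds = math.ceil(num_tasks / cores)
    return rounds * seconds_per_task


# Hypothetical figures chosen only for illustration.
tasks = 1_000_000
cpu_time = batch_time(tasks, cores=16, seconds_per_task=0.001)    # few fast cores
gpu_time = batch_time(tasks, cores=4096, seconds_per_task=0.010)  # many slower cores

print(f"CPU-style: {cpu_time:.2f} s, GPU-style: {gpu_time:.2f} s")
```

Under these assumptions the GPU-style configuration finishes the batch in about 2.45 seconds against about 62.5 seconds, even though each of its cores is ten times slower. For a single task the ordering flips (`batch_time(1, 16, 0.001)` is smaller than `batch_time(1, 4096, 0.010)`), which matches the CPU's strength on complex, varied work.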

CPU Core vs GPU Core: Power vs Parallelism.

Let’s break down CPU core vs. GPU core in a way that actually makes sense.

- CPU cores are powerful and versatile.

- GPU cores are simpler but massively parallel.

To put it simply, a CPU is a few experts solving different problems, whereas a GPU is thousands of workers solving one problem faster.

GPU vs. CPU Performance Comparison.

| Feature | CPU | GPU |
|---|---|---|
| Processing style | Sequential | Parallel |
| Cores | 4-32 | Thousands |
| Best for | General tasks | Heavy computations |
| AI performance | Moderate | Extremely high |
| Efficiency | Flexible | High throughput |

This GPU and CPU speed comparison clearly shows why GPUs are preferred for performance-heavy workloads.

The Real-World Use Cases: Where GPU Wins.

The impact of GPU vs. CPU becomes obvious when applied in real applications:

- AI and machine learning: Training AI models requires massive computation. GPUs can reduce training time from days to hours.

- Video rendering and media: Editing 4K/8K video becomes significantly faster with GPU acceleration.

- Cloud computing: Platforms like InHosted use GPU-powered infrastructure to deliver faster, scalable computing for businesses.

- Big data and analytics: Processing large datasets becomes efficient with parallel execution.

This is where GPU vs. CPU computing truly transforms business operations.

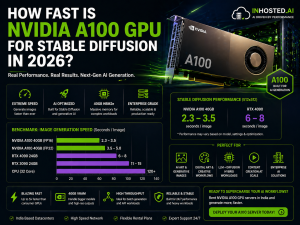

NVIDIA GPU vs. CPU: Why the Industries Are Shifting.

When we compare NVIDIA GPU performance to CPUs, we are really looking at where the future of computing is headed.

NVIDIA GPUs are used for tasks like the following:

- AI workloads.

- Machine learning.

- High-performance computing.

They deliver massive parallel processing power, making them the go-to choice for enterprises, startups, and cloud providers. GPUs are no longer optional; they have become essential.

When to Use GPU vs. CPU for Your Workload?

The choice between a GPU and a CPU is not about good or bad; it is about selecting the right fit.

When to use CPU:

- Running web servers.

- Managing databases.

- Handling simple applications.

When to use GPU:

- Training AI models.

- Running simulations.

- Processing large-scale data.
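The two lists above amount to a simple lookup, sketched below. The workload labels come straight from those lists; the function name and the "unknown" fallback are assumptions for illustration, and a real sizing decision would also weigh cost, data volume, and how parallel the work actually is.

```python
# Workload categories taken from the two lists above.
CPU_WORKLOADS = {"web server", "database", "simple application"}
GPU_WORKLOADS = {"ai training", "simulation", "large-scale data processing"}


def suggest_processor(workload):
    # Normalize the label so "AI Training" and "ai training" match.
    key = workload.strip().lower()
    if key in GPU_WORKLOADS:
        return "GPU"
    if key in CPU_WORKLOADS:
        return "CPU"
    # Anything not on either list needs a closer look at the workload itself.
    return "unknown"


print(suggest_processor("AI training"))  # prints "GPU"
print(suggest_processor("Database"))     # prints "CPU"
```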

Why Businesses Are Moving to GPU Infrastructure.

The shift toward GPUs is not a trend; it is a necessity.

Here are the reasons:

- Faster processing speed.

- Better scalability.

- Built for AI workloads.

- Reduced processing time.

With modern providers like Inhosted offering GPU-powered environments, businesses can now run heavy workloads without building complex infrastructure.

Why GPUs Are Powering the Future of Cloud.

GPUs do not just deliver speed; they unlock tasks that CPUs simply cannot handle efficiently. From AI workloads to real-time analytics, GPUs are popular for the following reasons:

- Quick processing.

- Cost savings.

- Seamless scaling.

This is exactly why leading cloud providers are prioritizing GPU infrastructure in 2026 and beyond.

Conclusion: The Future Belongs to GPUs.

The GPU vs. CPU debate is no longer about which is better; it is about choosing what truly fits your workload.

- CPUs remain important for control and general tasks.

- GPUs dominate in speed, scalability, and performance.

Understanding the GPU vs. CPU performance comparison and the CPU vs. GPU difference helps businesses make smarter decisions and stay competitive. A GPU is not just an upgrade; it’s your advantage.