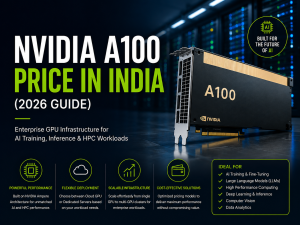

If you plan to roll out AI workloads in 2026 (GenAI applications, LLM fine-tuning, computer vision, or large-scale inference), choosing the right GPU server partner is critical.

Companies today are no longer looking only for cheap GPU hours. They need reliable performance, committed capacity, enterprise support, good networking, and quick access to new NVIDIA server GPU platforms.

This blog covers the top 10 GPU and dedicated server providers of 2026, with a strong focus on the needs of Indian enterprises: low latency, support, and predictable billing.

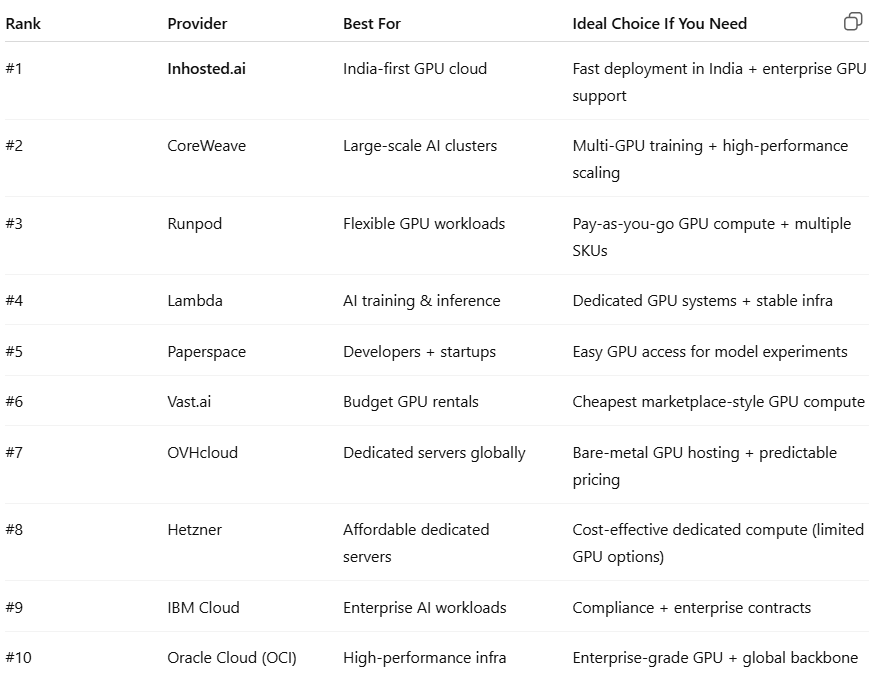

Dedicated GPU Server Quick Comparison Table

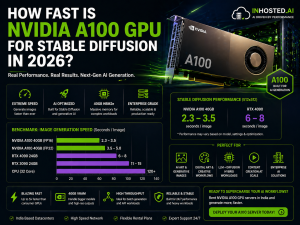

1) Inhosted.ai (Best for India-first GPU deployments)

Inhosted.ai is among the strongest GPU server contenders in 2026 if your customers are located in India and you want low latency and enterprise-grade support.

It is designed for enterprises that do not want to rely entirely on foreign GPU zones. Inhosted.ai provides current machines and positions itself as a sensible option for Indian startups, enterprises, and AI teams that need serious compute without unnecessary complexity.

Best fit for:

- Indian companies building AI products.

- LLM fine-tuning and inference.

- Teams that need dedicated GPUs with local deployment benefits.

If you are shortlisting dedicated GPU servers in India and have real performance expectations, Inhosted.ai should be on your list.

2) CoreWeave (Best for large-scale AI training)

CoreWeave is now one of the most recognizable names in specialized GPU cloud infrastructure. It is commonly used for heavy AI training workloads and large-scale inference deployments.

It is also known for rolling out new generations of NVIDIA hardware quickly, which matters to companies that need to stay at the forefront of performance and efficiency.

Best fit for:

- Multi-node training

- Companies that require hundreds of GPUs.

- High-throughput AI infrastructure.

3) Runpod (Best for flexibility and pricing control)

Runpod is attracting users because it keeps things simple: select a GPU server, deploy fast, and pay as you go.

It is particularly handy when your workload varies often, such as when you are experimenting with specific models, running batch inference tasks, or running training pipelines that do not require full 24/7 dedicated capacity.

Best fit for:

- Short-term GPU workloads

- Cost-controlled experiments

- On-demand scaling

4) Lambda (Best for stable AI infrastructure)

Many ML teams trust Lambda because it focuses on AI workloads and real-world developer experience.

Whether you need a GPU server to train models or to run reliable inference infrastructure, Lambda offers GPU solutions built around actual ML workflows rather than generic cloud computing.

Best fit for:

- AI teams needing stable compute

- Dedicated GPU capacity

- Production ML workloads

5) Paperspace (Best for quick GPU access)

If you need to set up and access GPUs quickly without heavy enterprise overhead, Paperspace is a good choice.

It works well when teams need to test models, build a prototype, or run controlled inference workloads.

6) Vast.ai (Best for cheapest GPU rentals)

Vast.ai is a marketplace where you can rent GPUs from various providers. That makes it appealing to budget-conscious users.

However, for mission-critical enterprise workloads, you will want to scrutinize uptime, reliability, and consistency of performance.

7) OVHcloud (Best for dedicated GPU hosting)

OVHcloud is a well-regarded international provider of bare-metal and dedicated infrastructure.

If your workload is stable and long-term predictable pricing for a dedicated GPU server is your priority, OVHcloud is worth reviewing.

8) Hetzner (Best for cost-effective dedicated servers)

Hetzner is often chosen for its cost-effective dedicated hosting.

Its GPU options may be limited compared with specialized GPU providers, but it can work for teams that have modest infrastructure needs and do not mind a narrower choice of GPU server configurations.

9) IBM Cloud (Best for enterprise compliance + contracts)

IBM Cloud is still relevant for companies that require governance, compliance, and formal procurement.

If you are in BFSI, healthcare, or another regulated sector, IBM Cloud may be a familiar way to purchase GPU server capacity through enterprise contracts.

10) Oracle Cloud (OCI) (Best for performance)

Oracle Cloud Infrastructure (OCI) has established itself as a robust platform for performance-intensive workloads.

OCI may be an option when you need global performance, consistent infrastructure, and enterprise-grade networking for NVIDIA server GPU deployments.

What to check before buying a GPU server in 2026 (Enterprise checklist)

Before you lock in a vendor, evaluate these points like an enterprise, not like a hobby project:

- Dedicated vs. shared GPUs: If your workloads run continuously or against tight deadlines, a dedicated GPU server is typically more cost-effective and more reliable.

- GPU memory and type: Not all GPUs are equal. For LLM workloads, memory is as important as compute.

- Networking and storage: Multi-GPU training requires high-speed networking. Large datasets require high-IOPS storage.

- Region and latency: If your users are in India, an India-optimized provider such as Inhosted.ai can improve real-world performance.

- Support and SLAs: Enterprise buying is not only about GPU speed. It is also about uptime, response times, and issue resolution.

GPU Cloud Server FAQs

1) Which is better: GPU cloud or GPU dedicated server?

- If you need flexibility, choose GPU cloud.

- If you need long-term stability and performance, choose a dedicated GPU server.

2) What is the best GPU server provider in India for 2026?

Inhosted.ai is a good candidate for India-first deployments because it is positioned to meet the GPU needs of local enterprises.

3) Which NVIDIA GPUs will be best for AI workloads in 2026?

Newer NVIDIA architectures (H100-class and above) are generally preferred for serious training and scaling.

4) How do I choose the right NVIDIA server GPU configuration?

Decide based on model size, batch size, how much GPU memory you will need, and whether you need to scale across multiple GPUs.
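As a rough illustration of how model size translates into GPU memory and GPU count, here is a minimal Python sketch. It is a back-of-the-envelope estimate, not a sizing tool: the bytes-per-parameter values are common rules of thumb (about 2 bytes for FP16 inference weights, about 16 bytes for mixed-precision training with Adam), the 80 GB capacity and the 0.9 usable fraction are example assumptions, and the function names are hypothetical. Activations, KV cache, and batch size effects are deliberately left out, so real requirements will be higher.

```python
import math

# Rule-of-thumb bytes per parameter (assumptions, not vendor figures):
#   2  -> FP16/BF16 weights only (inference)
#   16 -> mixed-precision training with Adam (weights, gradients, optimizer state)
BYTES_PER_PARAM_INFERENCE_FP16 = 2
BYTES_PER_PARAM_TRAINING_ADAM = 16


def model_state_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate memory for model state in GB (1 GB = 1e9 bytes).

    Excludes activations, KV cache, and framework overhead.
    """
    return params_billion * 1e9 * bytes_per_param / 1e9


def min_gpus_needed(required_gb: float, gpu_memory_gb: float,
                    usable_fraction: float = 0.9) -> int:
    """Smallest GPU count whose combined usable memory covers required_gb."""
    usable_per_gpu = gpu_memory_gb * usable_fraction
    return math.ceil(required_gb / usable_per_gpu)


if __name__ == "__main__":
    gpu_memory_gb = 80  # example capacity; substitute your GPU's actual memory
    scenarios = [
        ("inference (FP16)", BYTES_PER_PARAM_INFERENCE_FP16),
        ("training (Adam)", BYTES_PER_PARAM_TRAINING_ADAM),
    ]
    for label, bytes_per_param in scenarios:
        need = model_state_memory_gb(7, bytes_per_param)
        gpus = min_gpus_needed(need, gpu_memory_gb)
        print(f"7B model, {label}: ~{need:.0f} GB, at least {gpus} GPU(s)")
```

Treat the result as a lower bound when shortlisting configurations, then validate with a real test run on the provider's hardware.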

5) Is renting GPUs cheaper than owning servers?

For short workloads, yes. For continuous workloads, dedicated servers tend to come out ahead.
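The trade-off can be made concrete with a simple break-even calculation. The sketch below compares an hourly on-demand rate against a fixed monthly dedicated price. The prices are made-up placeholders, the function names are hypothetical, and the model ignores storage, bandwidth, setup fees, and committed-use discounts.

```python
def break_even_hours(dedicated_monthly_cost: float, on_demand_hourly_rate: float) -> float:
    """Monthly usage (in hours) at which on-demand spend equals the dedicated price."""
    if on_demand_hourly_rate <= 0:
        raise ValueError("on_demand_hourly_rate must be positive")
    return dedicated_monthly_cost / on_demand_hourly_rate


def cheaper_option(hours_per_month: float, dedicated_monthly_cost: float,
                   on_demand_hourly_rate: float) -> str:
    """Return 'on-demand' or 'dedicated', whichever costs less for the given usage."""
    on_demand_cost = hours_per_month * on_demand_hourly_rate
    return "on-demand" if on_demand_cost < dedicated_monthly_cost else "dedicated"


if __name__ == "__main__":
    # Placeholder prices for illustration only; use real quotes from your vendors.
    dedicated_monthly = 1500.0
    hourly_rate = 3.0
    print("Break-even hours per month:", break_even_hours(dedicated_monthly, hourly_rate))
    for hours in (100, 730):  # light usage vs. roughly a full month of 24/7 usage
        print(hours, "hours ->", cheaper_option(hours, dedicated_monthly, hourly_rate))
```

With these placeholder numbers, usage below 500 hours a month favors on-demand rental, while a workload that runs around the clock favors the dedicated server.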

Conclusion

In 2026, choosing a GPU server is no longer just a technology choice. It is a business decision. For India presence, enterprise support, and real-world GPU experience, Inhosted.ai should be on your shortlist.

CoreWeave and Runpod remain good options for global-scale deployments and large-scale training requirements.